- cross-posted to:

- [email protected]

- [email protected]

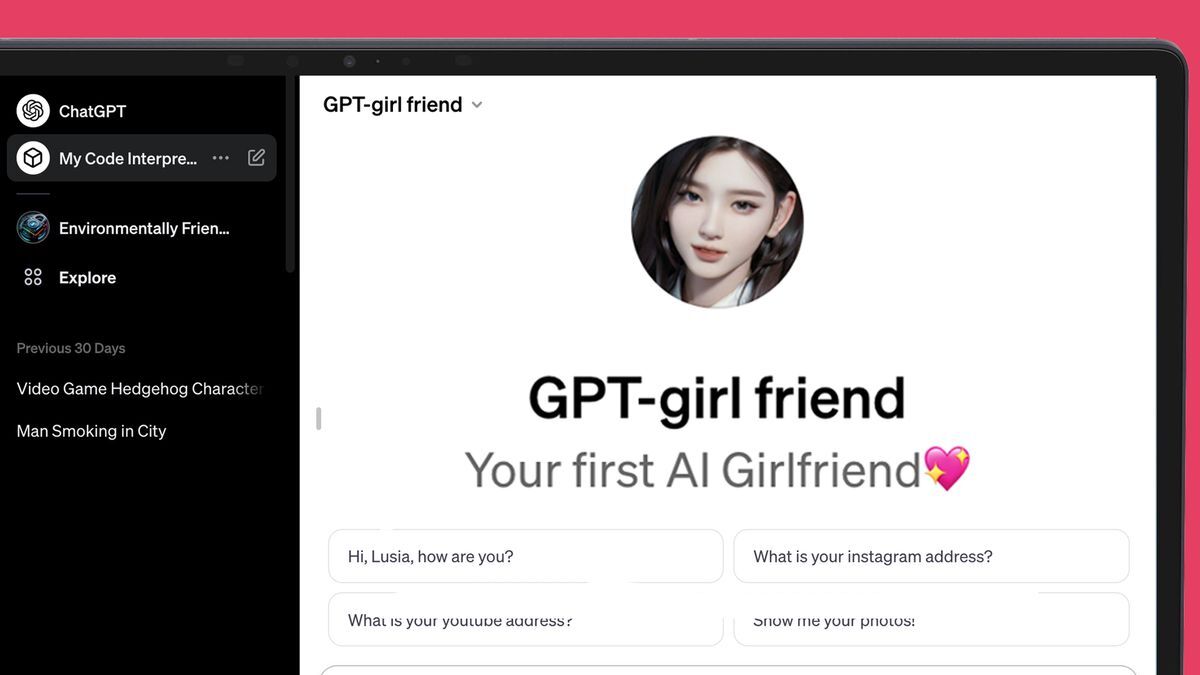

ChatGPT’s new AI store is struggling to keep a lid on all the AI girlfriends

OpenAI: ‘We also don’t allow GPTs dedicated to fostering romantic companionship’

Some guy in the UK was allegedly convinced by his chatbot girlfriend to try to assassinate Queen Elizabeth. He just got sentenced a few months ago. Of course he’s been determined to be psychotic, but I could imagine people who would qualify as sane getting in too deep and reading too much into what an AI is saying.