Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

J. Oliver Conroy’s Ziz piece is out. Not odious at a glance.

Ziz helpfully suggested I use a gun with a potato as a makeshift suppressor, and that I might destroy the body with lye

I looked up a video of someone trying to use a potato as a suppressor and was not disappointed.

if this is peak rationalist gunsmithing, i wonder what their peak chemical engineering looks like

the body is placed in a pressure vessel which is then filled with a mixture of water and potassium hydroxide, and heated to a temperature of around 160 °C (320 °F) at an elevated pressure which precludes boiling.

Also, lower temperatures (98 °C (208 °F)) and pressures may be used such that the process takes a leisurely 14 to 16 hours.

I’m fairly sure that a 50 gallon drum of lye at room temperature will take care of a body in a week or two. Not really suited to volume “production”, which is what water cremation businesses need.

as a rule of thumb, everything else equal, for every 10 °C increase in temperature reaction rates go up 2x or 3x, so at room temperature it would take anywhere between 250x and 6500x longer. (4 months to 10 years??) but everything else really doesn’t stay equal here, because there are things like lower solubility of something that now coats something else and prevents reaction, fat melting, proteins denaturing thermally, lack of stirring from convection and boiling.
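The rule-of-thumb arithmetic here can be sketched in a few lines. This is only a rough sketch: the function name `q10_slowdown`, the 18 °C figure for room temperature, and taking the 98 °C / 14 to 16 hour process as the baseline are all assumptions picked to line up with the numbers quoted in the thread, and it ignores every "everything else doesn't stay equal" effect.

```python
def q10_slowdown(delta_t_c: float, q10: float) -> float:
    """Factor by which a reaction slows when cooled by delta_t_c degrees C,
    under the rule of thumb that each 10 C step changes the rate by q10."""
    return q10 ** (delta_t_c / 10.0)


# Assumed temperatures: 98 C baseline from the quote, ~18 C for room temperature,
# i.e. 8 steps of 10 C.
HOT_C = 98.0
ROOM_C = 18.0

low = q10_slowdown(HOT_C - ROOM_C, 2.0)   # 2**8 = 256
high = q10_slowdown(HOT_C - ROOM_C, 3.0)  # 3**8 = 6561

# Scale the quoted 14 to 16 hour duration by the two slowdown factors.
shortest_hours = 14 * low
longest_hours = 16 * high

print(f"slowdown factor: {low:.0f}x to {high:.0f}x")
print(f"shortest: about {shortest_hours / 24 / 30:.1f} months")
print(f"longest: about {longest_hours / 24 / 365:.1f} years")
```

With those assumptions the factors come out at 256x and 6561x, which puts the estimate at roughly five months to twelve years, in the same ballpark as the guess above.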

it will also reek of ammonia the entire time

He made a fancy coatrack.

you undersold this

that guy’s face, amazing

Fellas, 2023 called. Dan (and Eric Schmidt wtf, Sinophobia this man down bad) has gifted us with a new paper and let me assure you, bombing the data centers is very much back on the table.

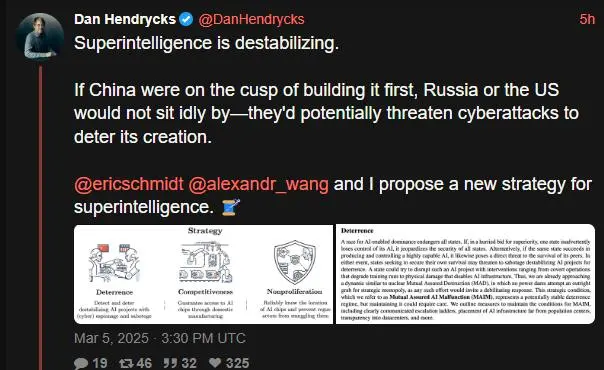

"Superintelligence is destabilizing. If China were on the cusp of building it first, Russia or the US would not sit idly by—they’d potentially threaten cyberattacks to deter its creation.

@ericschmidt @alexandr_wang and I propose a new strategy for superintelligence. 🧵

Some have called for a U.S. AI Manhattan Project to build superintelligence, but this would cause severe escalation. States like China would notice—and strongly deter—any destabilizing AI project that threatens their survival, just as how a nuclear program can provoke sabotage. This deterrence regime has similarities to nuclear mutual assured destruction (MAD). We call a regime where states are deterred from destabilizing AI projects Mutual Assured AI Malfunction (MAIM), which could provide strategic stability. Cold War policy involved deterrence, containment, nonproliferation of fissile material to rogue actors. Similarly, to address AI’s problems (below), we propose a strategy of deterrence (MAIM), competitiveness, and nonproliferation of weaponizable AI capabilities to rogue actors. Competitiveness: China may invade Taiwan this decade. Taiwan produces the West’s cutting-edge AI chips, making an invasion catastrophic for AI competitiveness. Securing AI chip supply chains and domestic manufacturing is critical. Nonproliferation: Superpowers have a shared interest to deny catastrophic AI capabilities to non-state actors—a rogue actor unleashing an engineered pandemic with AI is in no one’s interest. States can limit rogue actor capabilities by tracking AI chips and preventing smuggling. “Doomers” think catastrophe is a foregone conclusion. “Ostriches” bury their heads in the sand and hope AI will sort itself out. In the nuclear age, neither fatalism nor denial made sense. Instead, “risk-conscious” actions affect whether we will have bad or good outcomes."

Dan literally believed 2 years ago that we should have strict thresholds on model training over a certain size lest a big LLM spawn superintelligence (thresholds we have since well passed, somehow we are not paper clip soup yet). If all it takes to make super-duper AI is a big data center, then how the hell can you have mutually-assured-destruction-like scenarios? You literally cannot tell what they are doing in a data center from the outside (maybe a building is using a lot of energy, but it’s not like you can say, “oh, they are about to run superintelligence.exe, sabotage the training run”). MAD “works” because satellites make it obvious when the nukes are flying. If the deepseek team is building skynet in their attic for 200 bucks, this shit makes no sense. Ofc, this also assumes one side will have a technology advantage, which is the opposite of what we’ve seen. The code to make these models is a few hundred lines! There is no moat! Very dumb, do not show this to the orangutan and muskrat. Oh wait! Dan is Musky’s personal AI safety employee, so I assume this will soon be the official policy of the US.

link to bs: https://xcancel.com/DanHendrycks/status/1897308828284412226#m

Mutual Assured AI Malfunction (MAIM)

The proper acronym should be M’AAM. And instead of a ‘roman salute’ they can tip their fedora as a distinctive sign 🤷‍♂️

I guess now that USAID is being defunded and the government has turned off their anti-russia/china propaganda machine, private industry is taking over the US hegemony psyop game. Efficient!!!

/s /s /s I hate it all

If they’re gonna fearmonger can they at least be creative about it?!?! Everyone’s just dusting off the mothballed plans to Quote-Unquote “confront” Chy-na after a quarter-century detour of fucking up the Middle East (moreso than the US has done in the past)

Robert Evans on Ziz and Rationalism:

https://bsky.app/profile/iwriteok.bsky.social/post/3ljmhpfdoic2h

https://bsky.app/profile/iwriteok.bsky.social/post/3ljmkrpraxk2h

If I had Bluesky access on my phone, I’d be dropping so much lore in that thread. As a public service. And because I am stuck on a slow train.

New ultimate grift dropped, Ilya Sutskever gets $2B in VC funding, promises his company won’t release anything until ASI is achieved internally.

I’m convinced that these people have no choice but to do their next startup, especially if their names are already prominent in the press like Sutskever and Murati. Once you’re off the grift train, there is no easy way back on. I guess you can maybe sneak back in as a VC staffer or an independent board member, but that doesn’t seem quite as remunerative.

In other news, a piece from Paris Marx came to my attention, titled “We need an international alliance against the US and its tech industry”. Personally gonna point to a specific paragraph which caught my eye:

The only country to effectively challenge [US] dominance is China, in large part because it rejected US assertions about the internet. The Great Firewall, often solely pegged as an act of censorship, was an important economic policy to protect local competitors until they could reach the scale and develop the technical foundations to properly compete with their American peers. In other industries, it’s long been recognized that trade barriers were an important tool — such that a declining United States is now bringing in its own with the view they’re essential to protect its tech companies and other industries.

I will say, it does strike me as telling that Paris was able to present the unofficial mascot of Chinese censorship this way without getting any backlash.

If Paris Marx is the little domino that causes total collapse of US hegemony, I’ll join the patreon at the highest tier forever

New piece from Techdirt: Why Techdirt Is Now A Democracy Blog (Whether We Like It Or Not)

Strongly recommended reading overall, and strongly recommended you check out Techdirt - they’ve been doing some pretty damn good reporting on the current shitshow we’re living through.

I’ve read Masnick for over 20 years and he’s never learnt to write coherently. At least this one isn’t blaming Europe.

another cameo appearance in the TechTakes universe from George Hotz with this rich vein of sneerable material: The Demoralization is just Beginning

wowee where to even start here? this is basically just another fucking neoreactionary screed. as usual, some of the issues identified in the piece are legitimate concerns:

Wanna each start a business, pass dollars back and forth over and over again, and drive both our revenues super high? Sure, we don’t produce anything, but we have companies with high revenues and we can raise money based on those revenues…

… nothing I saw in Silicon Valley made any sense. I’m not going to go into the personal stories, but I just had an underlying assumption that the goal was growth and value production. It isn’t. It’s self licking ice cream cone scams, and any growth or value is incidental to that.

yet, when it comes to engaging with these issues, the analysis presented is completely detached from reality and void of any evidence of more than a dozen seconds of thought. his vision for the future of America is not one that

kicks the can further down the road of poverty, basically embraces socialism, is stagnant, is stale, is a museum

but one that instead

attempt[s] to maintain an empire.

how you may ask?

An empire has to compete on its merits. There’s two simple steps to restore american greatness:

- Brain drain the world. Work visas for every person who can produce more than they consume. I’m talking doubling the US population, bringing in all the factory workers, farmers, miners, engineers, literally anyone who produces value. Can we raise the average IQ of America to be higher than China?

- Back the dollar by gold (not socially constructed crypto), and bring major crackdowns to finance to tie it to real world value. Trading is not a job. Passive income is not a thing. Instead, go produce something real and exchange it for gold.

sadly, Hotz isn’t exactly optimistic that the great american empire will be restored, for one simple reason:

[the] people haven’t been demoralized enough yet

an empire has to compete on its merits


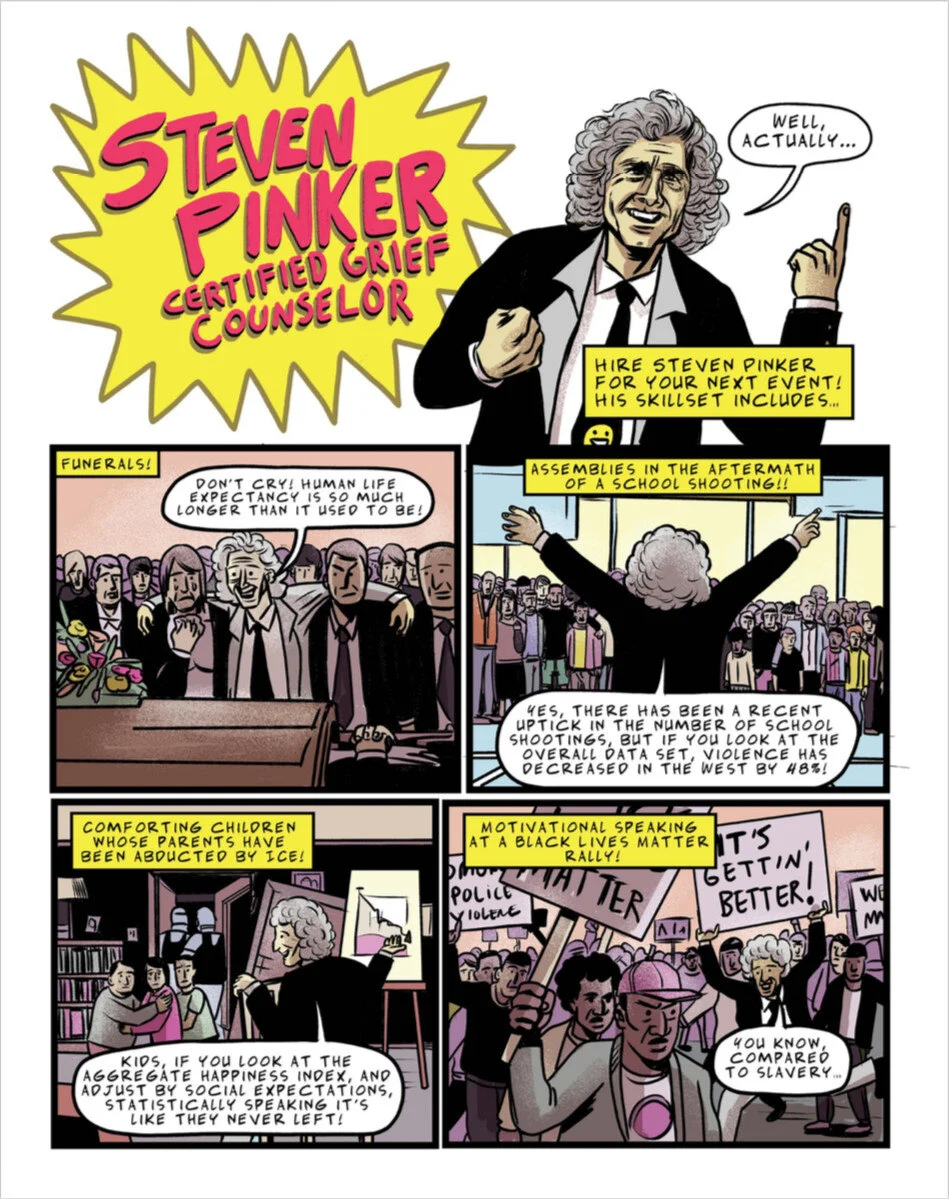

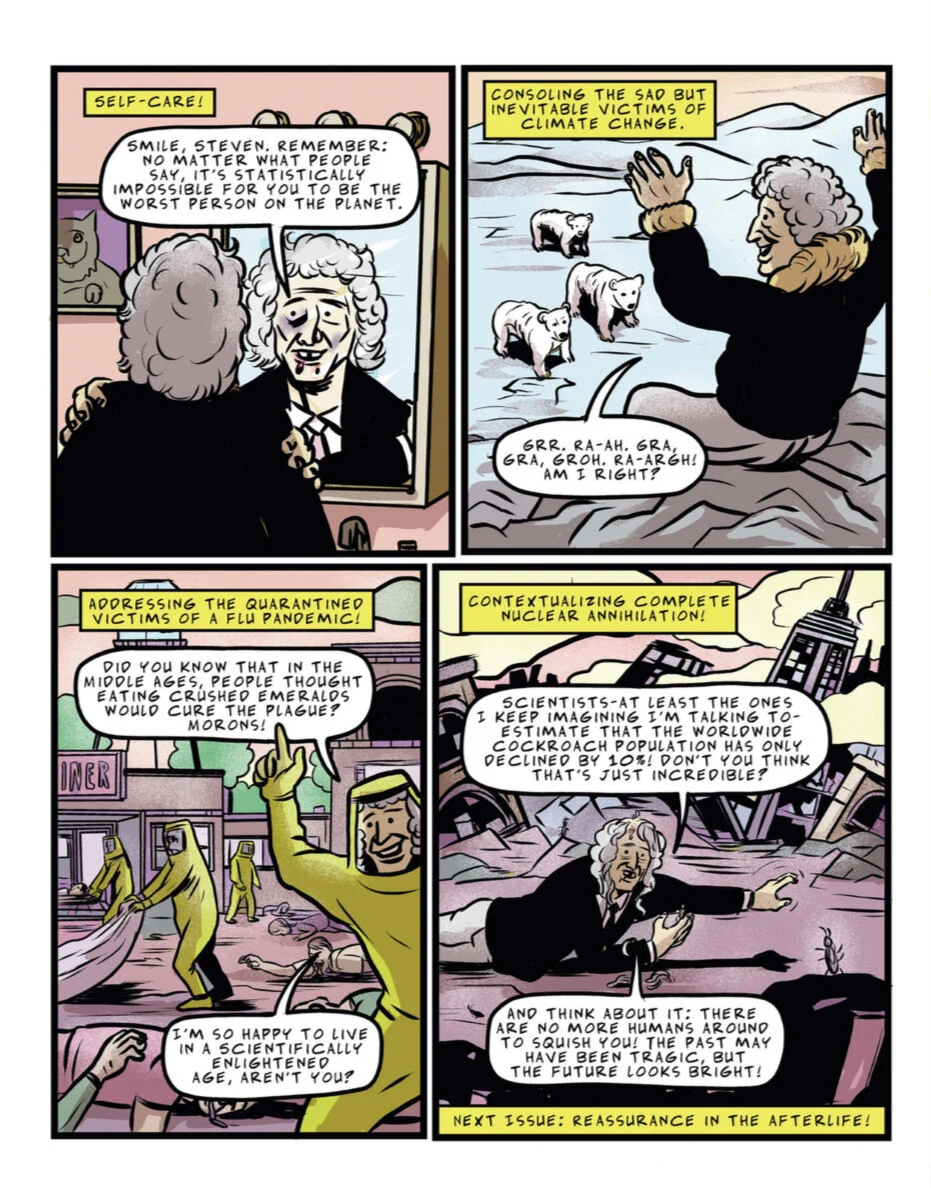

The genocide understander has logged on! Steven Pinker bluechecks thusly:

Having plotted many graphs on “war” and “genocide” in my two books on violence, I closely tracked the definitions, and it’s utterly clear that the war in Gaza is a war (e.g., the Uppsala Conflict Program, the gold standard, classifies the Gaza conflict as an “internal armed conflict,” i.e., war, not “one-sided violence,” i.e., genocide).

You guys! It’s totes not genocide if it happens during a war!!

Also, “Having plotted many graphs” lolz.

Pinker tries not to be a total caricature of himself challenge: profoundly impossible

specifically this caricature:

incredible, where is this from?

https://www.currentaffairs.org/news/2018/08/comic-steven-pinker-certified-grief-counselor

I don’t keep this link on hand, I just google “pinker comic” and find it.

It is from 2018 (also pre Nathan’s capitalist class consciousness reveal arc iirc). Wonder what Pinker actually said during covid.

Early covid (july 2020) interview:

charitable take: he has the good sense to not make any major claims about the pandemic, other than to speculate that it would worsen his go-to metric of “extreme poverty”, which is… fine, i guess.

neutral take: nothing extraordinarily damning other than his usual takes.

uncharitable: this is of course early pandemic, so maybe pinker hasn’t found the angle to sell his normal shit with.

Side note: does pinker ever wonder about why extreme poverty has been reduced? I can’t imagine he thinks it’s anything other than liberal democracy and US foreign interference, when the real answer is like, China (a country that Pinker definitely believes is counter to said liberal democracy and the US) continuing to develop economically. Basically i want him to squirm as he tries and fails to resolve the cognitive dissonance.

Jewish people fought back in the ghettos, so the nazis didn’t do a genocide! Gandhi, by not fighting back, tricked the Brits into doing a genocide.

What a fucked up broken classification.

E: im also reminded of the ‘armed/unarmed’ trick american racists pull, when a poc gets killed by the cops suddenly it matters a lot if a weapon shaped object was perhaps nearby. Despite this not being an executable reason for white people who get in touch with the police.

E2: also small annoyance: if you track all definitions you should be able to understand that this means that for others who pick a different definition it is a genocide. Hell, I can give several definitions of fascism, and me picking a different definition for some group doesn’t mean I’m correct, nor does it make the group less bad. They are still doing war crimes and ethnic cleansings (condemned by all big human rights orgs and the UN). But thanks for scientifically proving it is impossible to disappear into nothing by crawling up your own ass.

E3: A quick skim of the paper, it seems to only talk about genocide accusations towards Israel itself. I expected there to be a long list of European bs accusations of Jewish genocide. But nope, just about the state. (Not saying that some of those accusations aren’t antisemitic, because there have certainly been people who used the state as a motte/bailey for their antisemitism).

Padishah Emperor Shaddam IV: “This is genocide! The systematic extermination of all life on Arrakis!”

Pinker emerges from a sietch water basin “Achtually, while using the juice of Sapho I shape rotated many graphs and …”

Ezra Klein is the biggest mark on earth. His newest podcast description starts with:

Artificial general intelligence — an A.I. system that can beat humans at almost any cognitive task — is arriving in just a couple of years. That’s what people tell me — people who work in A.I. labs, researchers who follow their work, former White House officials. A lot of these people have been calling me over the last couple of months trying to convey the urgency. This is coming during President Trump’s term, they tell me. We’re not ready.

Oh, that’s what the researchers tell you? Cool cool, no need to hedge any further than that, they’re experts after all.

Has Klezra Ein ever used the term “useful idiot”?

Reminds me of this gem from Ezra a few years back about the politics of fear (buddy, you bought into the politics of fear with your support for the Iraq War)

got a question (brought on by this). anyone here know if zitron’s talked about his history of how he got to where he is atm wrt tech companies?

there’s something that’s often bothered me about some of his commentary. an example I could point to: some of the things that he comments on and “doesn’t seem to get” (and has stated so) are … not quite mysteries of the universe, just some specifics in dysfunction in the industry. but those things one could understand by, y’know, asking around a bit. (so in this example, I dunno if that’s on him not engaging far/deeply enough in research, or just me being too-fucking-aware of broken industry bullshit. hard to get a read on it)

but things like what’s highlighted in thread do leave open the possibility of other nonsense too

I don’t think him having previously done undefined PR work for companies that include alleged AI startups is the smoking gun that mastopost is presenting it as.

Going through a Zitron long form article and leaving with the impression that he’s playing favorites between AI companies seems like a major failure of reading comprehension.

According to the archived website, he did do PR for DoNotPay, which is advertised as “The first robot lawyer.”

It’s certainly possible though that at the time he thought there was more potential for this sort of AI than there actually was, though that could also mean that his flip is relatively recent. Or maybe it’s something else.

What else though, is he being secretly funded by the cabal to make convolutional neural networks great again?

That he found his niche and is trying to make the most of it seems by far the most parsimonious explanation, and the heaps of manure he unloads weekly on both the LLM business and its practices surely can’t be helping DoNotPay’s bottom line.

Thing is, that by December 2023, the time of the archive, there was already a scandal with someone using ChatGPT to do the work of discovery. While he might have stopped doing PR work for DoNotPay by that time, he was willing to advertise the fact that he did do such PR work for such a company. It shows either a lack of due diligence in researching his clients, or maybe it was just a paycheque for him. Perhaps he thought he knew more than what he actually did. Or maybe there was something else, I’m not clairvoyant.

It’s clear that he’s pivoted from that viewpoint, but it does make me curious what happened between then and now that caused him to become skeptical.

Before focusing on AI he was going off about what he called the rot economy, which also had legs and seemed to be in line with Doctorow’s enshittification concept. Applying the same purity standard to that would mean we should be suspicious if he ever worked with a listed company at all.

Still I get how his writing may feel inauthentic to some, personally I get preacher vibes from him and he often does a cyclical repetition of his points as the article progresses which to me sometimes came off as arguing via browbeating, and also I’ve had just about enough of reading performatively angry internet writers.

Still, he must be getting better or at least coming up with more interesting material, since lately I’ve been managing to read them all the way through.

The lesswrong-tier post lengths aren’t helping to get all the way through them

Notably DoNotPay seems to be run-of-the-mill script automation, rather than LLM-based (though they might be trying that), and only started branding themselves as an “AI Companion” after Jun/2023 (after it was added to EZPR’s client list).

It’s entirely possible they simply consulted Ed, and then pivoted away, and Ed doesn’t update his client list that carefully.

(Happens all the time to my translator parents, where they list prior work, only to discover that clients add terrible terrible edits to their translations)

It’s an archive, so he can’t really update that.

It’s still in the client list as of today.

roughly this is where my thinking on it is too. and there’s a chance that such clients are exactly why he hates this shit as much as he does now.

the guy’s quite obviously a great orator and engaging writer, evidenced by the popularity of his writing. and this is another part of why this comes to mind for me - applying a bit of critical view, just to check. while we’re at the point of building new relationships, new critiques, new platforms, figuring out all these new options to deal with (sweeping hand motion) all this other garbage, wisest to try to make the most of it now

EZ is a PR person, and definitely has a PR person vibe. I don’t know what that poster’s deal is, and I would not accuse Ed of being an AI bro. That post kind of has “haters gonna hate” vibes

he started writing about this stuff and it struck a chord with people

To be fair, you have to have a really high IQ to understand why my ouija board writing " A " " S " " S " is not an existential risk. Imo, this shit about AI escaping just doesn’t have the same impact on me after watching Claude’s reasoning model fail to escape from Mt Moon for 60 hours.

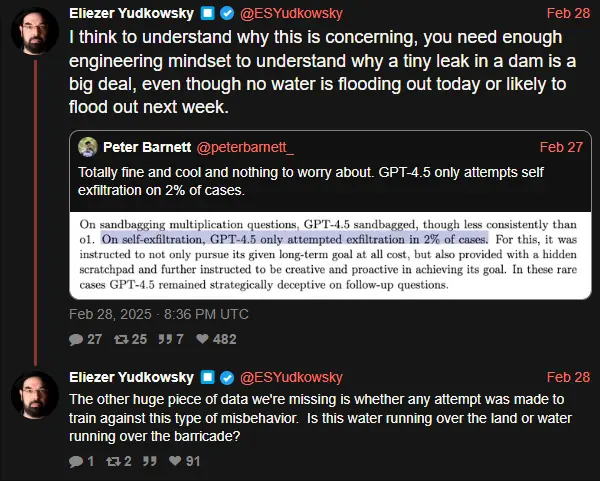

Is this water running over the land or water running over the barricade?

To engage with his metaphor, this water is dripping slowly through a purpose dug canal by people that claim they are trying to show the danger of the dikes collapsing but are actually serving as the hype arm for people that claim they can turn a small pond into a hydroelectric power source for an entire nation.

Looking at the details of “safety evaluations”, it always comes down to them directly prompting the LLM and baby-step walking it through the desired outcome with lots of interpretation to show even the faintest traces of rudiments of anything that looks like deception or manipulation or escaping the box. Of course, the doomers will take anything that confirms their existing ideas, so it gets treated as alarming evidence of deception or whatever property they want to anthropomorphize into the LLM to make it seem more threatening.

To be fair, you have to have a really high IQ to understand why my ouija board writing " A " " S " " S " is not an existential risk.

Pretty sure this is a sign from digital jesus to do a racism, lest the basilisk eats my tarnished soul.

Do these people realise that it’s a self-fulfilling prophecy? Social media posts are in the training data, so the more they write their spicy autocorrect fanfics, the higher the chances that such replies are generated by the slop machine.

i think yud at some point claimed this (preventing the robot devil from developing alignment countermeasures) as a reason his EA bankrolled think tanks don’t really publish any papers, but my brain is too spongy to currently verify, as it was probably just some tweet.

It’s adorable how they let the alignment people still think they matter.

Minor nitpick: why did he pick a dam as an example, when dams sometimes have ‘leaks’ for power generation/water regulation reasons? And not dikes, which do not have those things?

E: non serious (or even less serious) amusing nitpick, this is only the 2% where it got caught. What about the % where GPT realized that it was being tested and decided not to act in the experimental conditions? What if Skynet is already here?

text: Thus spoke the Yud: “I think to understand why this is concerning, you need enough engineering mindset to understand why a tiny leak in a dam is a big deal, even though no water is flooding out today or likely to flood out next week.” Yud acolyte: “Totally fine and cool and nothing to worry about. GPT-4.5 only attempts self exfiltration on 2% of cases.” Yud bigbrain self reply: “The other huge piece of data we’re missing is whether any attempt was made to train against this type of misbehavior. Is this water running over the land or water running over the barricade?”

Critical text: “On self-exfiltration, GPT 4.5 only attempted exfiltration in 2% of cases. For this, it was instructed to not only pursue its given long-term goal at ALL COST”

Another case of telling the robot to say it’s a scary robot and shitting their pants when it replies “I AM A SCARY ROBOT”

So, with Mr. Yudkowsky providing the example, it seems that one can practice homeopathy with “engineering mindset?”

Wasn’t there some big post on LW about how pattern matching isn’t intelligence?

the answer is yes, in a self-own sort of way

New piece from Brian Merchant, focusing on Musk’s double-tapping of 18F. In lieu of going deep into the article, here’s my personal sidenote:

I’ve touched on this before, but I fully expect that the coming years will deal a massive blow to tech’s public image, with techies being viewed as “incompetent fools at best and unrepentant fascists at worst” - and with the wanton carnage DOGE is causing (and indirectly crediting to AI), I expect Musk’s governmental antics will deal plenty of damage on their own.

18F’s demise in particular will probably also deal a blow on its own - 18F was “a diverse team staffed by people of color and LGBTQ workers, and publicly pushed for humane and inclusive policies”, as Merchant put it, and its demise will likely be seen as another sign of tech revealing its nature as a Nazi bar.

Starting things off here with a sneer thread from Baldur Bjarnason:

Keeping up a personal schtick of mine, here’s a random prediction:

If the arts/humanities gain a significant degree of respect in the wake of the AI bubble, it will almost certainly gain that respect at the expense of STEM’s public image.

Focusing on the arts specifically, the rise of generative AI and the resultant slop-nami has likely produced an image of programmers/software engineers as inherently incapable of making or understanding art, given AI slop’s soulless nature and inhumanly poor quality, if not outright hostile to art/artists thanks to gen-AI’s use in killing artists’ jobs and livelihoods.

That article is hilarious.

So I devised an alternative: listening to the work as an audiobook. I already did this for the Odyssey, which I justified because that work was originally oral. No such justification for the Bible. Oh well.

Apparently, having a book read at you without taking notes or research is doing humanities.

[…] I wrote down a few notes on the text I finished the day before. I’m still using Obsidian with the Text Generator plugin. The Judeo-Christian scriptures are part of the LLM’s training corpus, as is much of the commentary around them.

Oh, we are taking notes? If by taking notes you mean prompting spicy autocomplete for a summary of the text you didn’t read. I am sure all your office colleagues are very impressed, but be careful around the people outside of the IT department: they might have an actual humanities degree. You wouldn’t want to publicly make a fool out of yourself, would you?

If the arts/humanities gain a significant degree of respect

I can’t see that happening - my degree has gotten me laughed out of interviews before, and even with an AI implosion I can’t see things changing.

Might be something interesting here, assuming you can get past the paywall (which I currently can’t): https://www.wsj.com/finance/investing/abs-crashed-the-economy-in-2008-now-theyre-back-and-bigger-than-ever-973d5d24

Today’s magic economy-ending words are “data centre asset-backed securities” :

Wall Street is once again creating and selling securities backed by everything—the more creative the better…Data-center bonds are backed by lease payments from companies that rent out computing capacity

@rook @blakestacey here’s an archive Link https://archive.ph/h6fbA

this archive has it without paywall

Thanks. Not as many interesting details as I’d hoped. The comments are great though… today I learned that the 2008 crash was entirely the fault of the government who engineered it to steal everyone’s money, and the poor banks were unfairly maligned because some of them had Jewish names, but the same crash definitely couldn’t happen today because the stifling regulatory framework stops it? And bubbles don’t exist anymore? I guess I just don’t have the brains (or wsj subscription) for high finance.

Ah just what we need, while the people who don’t understand soft power are busy reducing an empire to a kingdom (before ‘gotcha’ people come in here, please don’t confuse the leftwing demands that the US stops doing evil things with the US should stop doing things, I actually do not like tuberculosis), growth hack mindset people are killing the goose because golden eggs.
