First and foremost, the dunce is incapable of valuing knowledge that they don’t personally understand or agree with. If they don’t know something, then that thing clearly isn’t worth knowing.

There is a corollary to this that I’ve seen as well, and it dovetails with the way so many of these guys get obsessed with IQ. Anything they can’t immediately understand must be nonsense not worth knowing. Anything they can understand (or think they understand) that you don’t is clearly an arcane secret of the universe that they can only grasp because of their innate superiority. I think that this is the combination that explains how so many of these dunces believe themselves to be the ubermensch who must exercise authoritarian power over the rest of us for the good of everyone.

See also the commenter(s) on this thread who insist that their lack of reading comprehension is evidence that they’re clearly correct and are in no way part of the problem.

@YourNetworkIsHaunted I honestly was wondering why they were obsessing over IQ so much, but this comment actually made it all click

in response to Bender pointing out that ChatGPT and its competitors simply encode relationships between words and have no concept of referent or meaning, which is a devastating critique of what the technology actually does, the absolute best response he can muster for his work is “yeah, but humans don’t do anything more complicated than that”. I mean, speak for yourself Sam: the rest of us have some concept of semiotics, and we can do things like identify anagrams or count the number of letters in a word, which requires a level of recursivity that’s beyond what ChatGPT can muster.

Boom Shanka (emphasis added)

‘i am a stochastic parrot and so are u’

reminds me of

“In his desperation to have produced reality through computation, he denigrates actual reality by equating it to computation”

(from this review/analysis of the Devs series). A pattern annoyingly common among the LLM AI fans.

E: Wow, I did not like the reactionary great man theory spin this article took there. Don’t think replacing the Altmans with Yarvins would be a big solution there. (At least that is how the NRx people would read this article). Quite a lot of the ‘we need more well read renaissance men’ people turned into hardcore trump supporters (and racists, and sexists and…). (Note this edit is after I already got 45 upvotes).

I’m glad I’m not the only one who picked up on that turn. The implication that what we need is an actual Bismarck instead of a wannabe like we keep getting makes sense (I too would prefer if the levers of power were wielded by someone halfway competent who listens to and cares about people around them) but there are also some pretty strong reasons why we went from Bismarck and Lincoln to Merkel and Trump, and also some pretty strong reasons why the road there led through Hitler and Wilson.

Along with my comments elsewhere about how the dunce believes their area of hypothetical expertise to be some kind of arcane gift revealed to the worthy, I feel like I should clarify that not only do the current crop of dolts not have it but that there is no secret wisdom beyond the ken of normal men. That is a lie told by the powerful to stop you from questioning their position; it’s the “because I’m your Dad and I said so” for adults. Learning things is hard and hard means expensive, so people with wealth and power have more opportunities to study things, but that lack of opportunity is not the same as lacking the ability to understand things and to contribute to a truly democratic process.

someone sent out the batpromptfondler signal and the mods are in shooting gallery mode

please refrain from commenting unaccordingly

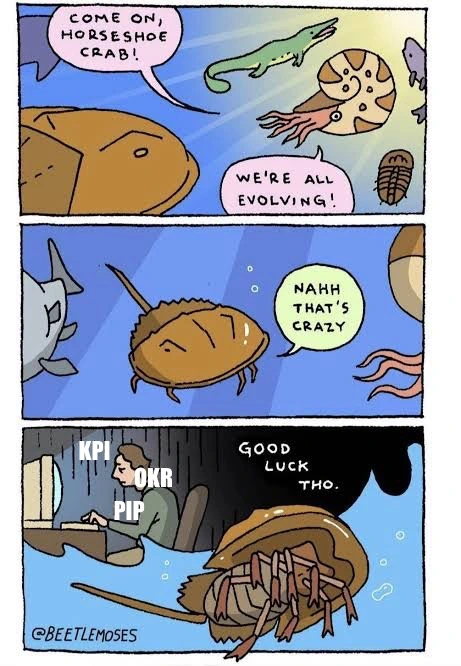

b-but David, they’ve been so reasonable and here we are getting emotional about the fucking garbage technology they’ve come here to shove down our throats alongside a heaping serving of capitalist brainrot from the same types of self-described geniuses who gave us OKRs

MRW 38 of the 39 comments have almost nothing to do with the article

That website only works in private mode on Firefox for me, and even then some pages display different things than it is saying it will. It feels like an easter egg almost, does someone have more info about this group?

u wot? works fine in Firefox here. Try this archive or this archive.

I think our comments just crossed each other, read my follow up. I think it was an issue with the site at that specific moment (500 error) instead of the site being quirky

same here, works fine in Fx (133, aarch64)

Privacy Browser with JS off (by default) can read the article and navigate, only minor eye-sore are the buttons at the top of the site which are on a transparent background and stay on top of the text as I scroll down

hmm maybe it was just a temporary issue. I was getting a 500 error while still seeing part of the site, and the about page had some pseudocode on it that I thought was intentional, but maybe it was just being a bit buggy because now it seems fine.

The blog post itself is an interesting read by the way, forgot to mention that in my curiosity for interesting web pages

After all, there’s almost nothing that ChatGPT is actually useful for.

It’s takes like this that just discredit the rest of the text.

You can dislike LLM AI for its environmental impact or questionable interpretation of fair use when it comes to intellectual property. But pretending it’s actually useless just makes someone seem like they aren’t dissimilar to a Drama YouTuber jumping in on whatever the latest on-trend thing to hate is.

“Almost nothing” is not the same as “actually useless”. The former is saying the applications are limited, which is true.

LLMs are fine for fictional interactions, as in things that appear to be real but aren’t. They suck at anything that involves being reliably factual, which is most things, including all the stupid places LLMs and other AI are being jammed into despite being consistently wrong, which tech bros love to call hallucinations.

They have LIMITED applications, but are being implemented as useful for everything.

To be honest, as someone who’s very interested in computer generated text and poetry and the like, I find generic LLMs far less interesting than more traditional markov chains because they’re too good at reproducing clichés at the exclusion of anything surprising or whimsical. So I don’t think they’re very good for the unfactual either. Probably a homegrown neural network would have better results.

I’m in the same boat. Markov chains are a lot of fun, but LLMs are way too formulaic. It’s one of those things where AI bros will go, “Look, it’s so good at poetry!!” but they have no taste and can’t even tell that it sucks; LLMs just generate ABAB poems and getting anything else is like pulling teeth. It’s a little more garbled and broken, but the output from a MCG is a lot more interesting in my experience. Interesting content that’s a little rough around the edges always wins over smooth, featureless AI slop in my book.

slight tangent: I was interested in seeing how they’d work for open-ended text adventures a few years ago (back around GPT2 and when AI Dungeon was launched), but the mystique did not last very long. Their output is awfully formulaic, and that has not changed at all in the years since. (of course, the tech optimist-goodthink way of thinking about this is “small LLMs are really good at creative writing for their size!”)

I don’t think most people can even tell the difference between a lot of these models. There was a snake oil LLM (more snake oil than usual) called Reflection 70b, and people could not tell it was a placebo. They thought it was higher quality and invented reasons why that had to be true.

Like other comments, I was also initially surprised. But I think the gains are both real and easy to understand where the improvements are coming from. [ . . . ]

I had a similar idea, interesting to see that it actually works. [ . . . ]

I think that’s cool, if you use a regular system prompt it behaves like regular llama-70b. (??!!!)

It’s the first time I’ve used a local model and did [not] just say wow this is neat, or that was impressive, but rather, wow, this is finally good enough for business settings (at least for my needs). I’m very excited to keep pushing on it. Llama 3.1 failed miserably, as did any other model I tried.

For story telling or creative writing, I would rather have the more interesting broken english output of a Markov chain generator, or maybe a tarot deck or D100 table. Markov chains are also genuinely great for random name generators. I’ve actually laughed at Markov chains before with friends when we throw a group chat into one and see what comes out. I can’t imagine ever getting something like that from an LLM.
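For anyone who hasn't played with one: a rough sketch of the kind of word-level Markov chain generator being described, assuming a first-order chain and a toy corpus standing in for the group chat log. The names `build_chain` and `generate` are made up for illustration, not taken from any particular bot.

```python
import random
from collections import defaultdict


def build_chain(text, order=1):
    """Map each run of `order` words to the list of words seen right after it."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        key = tuple(words[i:i + order])
        chain[key].append(words[i + order])
    return chain


def generate(chain, length=12, seed=None):
    """Walk the chain from a random starting key, picking followers at random."""
    rng = random.Random(seed)
    key = rng.choice(list(chain.keys()))
    out = list(key)
    for _ in range(length - len(key)):
        followers = chain.get(key)  # .get avoids creating empty entries
        if not followers:
            break  # dead end: this key was only ever seen at the end of the text
        out.append(rng.choice(followers))
        key = tuple(out[-len(key):])
    return " ".join(out)


# Toy corpus (hypothetical); in practice this would be the exported chat log.
corpus = "the cat sat on the mat and the dog sat on the cat"
chain = build_chain(corpus)
print(generate(chain, length=10, seed=1))
```

Repeated followers are kept in the lists on purpose, so common continuations get picked more often. Feeding it characters instead of words gives you the random name generator variant.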

GPT-2 was peak LLM because it was bad enough to be interesting, it was all downhill from there

Absolutely, every single one of these tools has got less interesting as they refine it so it can only output the platonic ideal of kitsch.

Agreed, our chat server ran a Markov chain bot for fun.

Compared to ChatGPT on a second server I frequent, it had much funnier and more random responses.

ChatGPT tends to just agree with whatever it chose to respond to.

As for real-world use, ChatGPT produces the wrong answer 90% of the time. I’ve enjoyed Circuit AI however. While it also produces incorrect responses, it shares its sources so I can more easily get the right answer.

All I really want from a chatbot is a gremlin that finds the hard things to Google on my behalf.

It’s useful insofar as you can accommodate its fundamental flaw of randomly making stuff the fuck up, say by having a qualified expert constantly combing its output instead of doing original work, and don’t mind putting your name on low quality derivative slop in the first place.

Let’s be real here: when people hear the word AI or LLM they don’t think of any of the applications of ML that you might slap the label “potentially useful” on (notwithstanding the fact that many of them also are in an all-that-glitters-is-not-gold–kinda situation). The first thing that comes to mind for almost everyone is shitty autoplag like ChatGPT, which is also what the author explicitly mentions.

I’m saying ChatGPT is not useless.

I’m a senior software engineer and I make use of it several times a week either directly or via things built on top of it. Yes you can’t trust it will be perfect, but I can’t trust a junior engineer to be perfect either—code review is something I’ve done long before AI and will continue to do long into the future.

I empirically work quicker with it than without and the engineers I know who are still avoiding it work noticeably slower. If it was useless this would not be the case.

I’m a senior software engineer

ah, a señor software engineer. excusé-moi monsoir, let me back up and try once more to respect your opinion

uh, wait:

but I can’t trust a junior engineer to be perfect either

whoops no, sorry, can’t do it.

jesus fuck I hope the poor bastards that are under you find some other place real soon, you sound like a godawful leader

and the engineers I know who are still avoiding it work noticeably slower

yep yep! as we all know, velocity is all that matters! crank that handle, produce those features! the factory must flow!!

fucking christ almighty. step away from the keyboard. go become a logger instead. your opinions (and/or the shit you’re saying) is a big part of everything that’s wrong with industry.

and the engineers I know who are still avoiding it work noticeably slower

yep yep! as we all know, velocity is all that matters! crank that handle, produce those features! the factory must flow!!

and you fucking know what? it’s not even just me being a snide motherfucker, this rant is literally fucking supported by data:

The survey found that 75.9% of respondents (of roughly 3,000* people surveyed) are relying on AI for at least part of their job responsibilities, with code writing, summarizing information, code explanation, code optimization, and documentation taking the top five types of tasks that rely on AI assistance. Furthermore, 75% of respondents reported productivity gains from using AI.

…

As we just discussed in the above findings, roughly 75% of people report using AI as part of their jobs and report that AI makes them more productive.

And yet, in this same survey we get these findings:

if AI adoption increases by 25%, time spent doing valuable work is estimated to decrease 2.6%

if AI adoption increases by 25%, estimated throughput delivery is expected to decrease by 1.5%

if AI adoption increases by 25%, estimated delivery stability is expected to decrease by 7.2%

and that’s a report sponsored and managed right from the fucking lying cloud company, no less. a report they sponsor, run, manage, and publish is openly admitting this shit. that is how much this shit doesn’t fucking work the way you sell it to be doing.

but no, we should trust your driveby bullshit. motherfucker.

Lol, using a survey to try and claim that your argument is “supported by data”.

Of course the people who use Big Autocorrect think it’s useful, they’re still using it. You’ve produced a tautology and haven’t even noticed. XD

it may be a shock to learn this, but asking people things is how you find things out from them

I know it requires speaking to humans, alas, c’est la vie

It may be a shock to learn this, but asking people things is how you find out what they think, not what is true.

I know proof requires more than just speaking to humans, alas, c’est la vie.

christ, did someone fire up the Batpromptfondler signal

Please, señor software engineer was my father. Call me Bob.

snrk

Thank you for saving me the breath to shit on that person’s attitude :)

yw

these arseslugs are so fucking tedious, and for almost 2 decades they’ve been dragging everything and everyone around them down to their level instead of finding some spine and getting better

word. When I hear someone say “I’m a SW developer and LLM xy helps me in my work” I always have to stop myself from being socially unacceptably open about my thoughts on their skillset.

and that’s the pernicious bit: it’s not just their skillset, it also goes right to their fucking respect for their team. “I don’t care about just barfing some shit into the codebase, and I don’t think my team will mind either!”

utter goddamn clownery

let me back up and try once more to respect your opinion

The point of me saying that was to imply I’ve been in the industry for a couple of decades, and have a good amount of experience from before all this. It wasn’t any kind of appeal to authority, but I can see how you can read it that way.

jesus fuck I hope the poor bastards that are under you find some other place real soon, you sound like a godawful leader

I’m sorry, do you trust junior engineers blindly? That’s gonna lead to a much worse outcome than if they get feedback when they do something wrong. Frankly, I don’t trust any engineer to be perfect, we’re humans and humans make mistakes, that’s why we do code review as a fundamental skill in this industry. It’s one of the primary ways for people to develop their ability.

yep yep! as we all know, velocity is all that matters! crank that handle, produce those features! the factory must flow!!

In an industry where many companies are tightening the belt, yes it’s important to perform well—I kinda want to keep my job and ideally get a good bonus. It would be pretty foolish to leave free productivity on the table when the alternative is working harder to bridge the gap, where I could spend that energy doing more productive stuff.

I’m sorry, do you trust junior engineers blindly?

as a starting position, fucking YES. you know why I hired that person? because I believe they can do the job and grow in it. you know what happens if they make a mistake? I give them all the goddamn backup they need to handle it and grow.

“this is why code review is so important” jfc. you’re one of those “I’ve worked here for 4 years and I’m a senior” types, aren’t you

@froztbyte @9point6 There’s a distinct difference between “I have twenty years of experience” and “I’ve had the same ten minutes of experience over and over again, over a twenty year period” 🤷

Oh jesus christ now I get it.

Thank you. This single sentence explains to me how the fuck those people are able to exist for 20 years and still be so shit at their job.

yep. on topic of which, this excellent post

So you don’t do code review? Something that’s pretty much industry standard?

What on earth do you work on where it’s inconsequential to trust someone new to the industry blindly?

If I could trust someone anything remotely close to “blindly”, they absolutely would not have been hired as a junior.

yep yep. no code review. no version control either. that’s weak shit only babies use. over here you deploy patches by live editing app memory in production, and you update the codebase by editing the central repo using vscode remote. everyone has access to it because monorepos are what google do and so do we.

you have a 100% correct comprehension takeaway of what I said, well done!

jfc no wonder you’re fine with LLMs

I, for one, am not in the industry and can’t figure out why people are coming at you with guns blazing. 🙄

I kinda want to keep my job and ideally get a good bonus.

fuck you

“I just want to be a cog in the machiiiiiiine why are you bringing up these things that make me think?! ew ethics and integrity are so hard”

Oh my god, an actual senior software engineer??? Amidst all of us mortals??

Senior software ~~engineer~~ programmer here. I have had to tell coworkers “don’t trust anything chat-gpt tells you about text encoding” after it made something up about text encoding.

ah but did you tell them in CP437 or something fancy (like any text encoding after 1996)? 🤨🤨🥹

Sadly all my best text encoding stories would make me identifiable to coworkers so I can’t share them here. Because there’s been some funny stuff over the years. Wait where did I go wrong that I have multiple text encoding stories?

That said I mostly just deal with normal stuff like UTF-8, UTF-16, Latin1, and ASCII.

My favourite was a junior dev who was like, “when I read from this input file the data is weirdly mangled and unreadable so as the first processing step I’ll just remove all null bytes, which seems to leave me with ASCII text.”

(It was UTF-16.)
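For anyone who hasn't been bitten by this one yet, a minimal sketch of why the null-byte trick looks like it works and then doesn't. It assumes little-endian UTF-16 with no BOM (the story doesn't say which flavour it was), and `strip_nulls` is a hypothetical stand-in for the junior dev's processing step.

```python
def strip_nulls(data: bytes) -> bytes:
    """The 'fix' from the story: throw away every null byte."""
    return data.replace(b"\x00", b"")


# ASCII-range characters encode as <byte, 0x00> in UTF-16-LE,
# so dropping the nulls happens to leave plain ASCII behind.
ascii_raw = "hello".encode("utf-16-le")
print(ascii_raw)               # every other byte is a null
print(strip_nulls(ascii_raw))  # looks like ordinary ASCII text

# Outside that range the trick falls apart: U+0100 is <0x00, 0x01>
# and U+20AC is <0xAC, 0x20>, so stripping nulls destroys one code
# unit and leaves the other as two unrelated bytes.
tricky = "Ā€"
tricky_raw = tricky.encode("utf-16-le")
print(strip_nulls(tricky_raw))         # bytes no longer map back to the text
print(tricky_raw.decode("utf-16-le"))  # decoding properly recovers it
```

The boring answer is to decode with the right codec up front instead of massaging the bytes until they look readable.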

You’ve got to make sure you’re not over-specializing. I’d recommend trying to roll your own time zone library next.

I’m a senior software engineer

Nice, me too, and whenever some tech-brained C-suite bozo tries to mansplain to me why LLMs will make me more efficient, I smile, nod politely, and move on, because at this point I don’t think I can make the case that pasting AI slop into prod is objectively a worse idea than pasting Stack Overflow answers into prod.

At the end of the day, if I want to insert a snippet (which I don’t have to double-check, mind you), auto-format my code, or organize my imports, which are all things I might use ChatGPT for if I didn’t mind all the other baggage that comes along with it, Emacs (or Vim, if you swing that way) does this just fine and has done so for over 20 years.

I empirically work quicker with it than without and the engineers I know who are still avoiding it work noticeably slower.

If LOC/min or a similar metric is used to measure efficiency at your company, I am genuinely sorry.

I agree with you on the examples listed, there are much better tools than an LLM for that. And I agree no one should be copy and pasting without consideration, that’s a misuse of these tools.

I’d say my main uses are kicking off a new test suite (obviously you need to go and check the assertions are what you expect, but it’s usually about 95% there) which has gone from a decent percentage of the work for a feature down to an almost negligible amount of time. This one also results in me enjoying my job a bit more now too as I’ve always found writing tests a bit of a drudgery.

The other big use for me is that my organisation is pretty big and has a hefty amount of code (a good couple of thousand repos at least), we have a tool that’s based on GPT which has processed all the code, so you can now ask queries about internal stuff that may not be well documented or particularly obvious. This one saves a load of time because I now don’t always have to do the Slack merry go round to try and find an engineer that knows about what I’m looking for—sometimes it’s still unavoidable, but they’re less frequent moments now.

If LOC/min or a similar metric is used to measure efficiency at your company, I am genuinely sorry.

It’s tied to OKR completion, which is generally based around delivery. If you deliver more feature work, it generally means your team’s scores will be higher and assuming your manager is aware of your contributions, that translates to a bigger bonus. It’s more of a carrot than a stick situation IMO, I could work less hard if I didn’t want the extra money.

It’s tied to OKR completion, which is generally based around delivery. If you deliver more feature work, it generally means your team’s scores will be higher and assuming your manager is aware of your contributions, that translates to a bigger bonus.

holy fuck. you’re so FAANG-brained I’m willing to bet you dream about sending junior engineers to the fulfillment warehouse to break their backs

motherfucking, “i unironically love OKRs and slurping raises out of management if they notice I’ve been sleeping under my desk again to get features in” do they make guys like you in a factory? does meeting fucking normal software engineers always end like it did in this thread? will you ever realize how fucking embarrassing it is to throw around your job title like this? you depressing little fucker.

gilding the lily a bit but

I worked at one of the biggest AI companies and their internal AI question/answer was dogshit for anything that could be answered by someone with a single fold in their brain. Maybe your co has a much better one, but like most others, I’m gonna go with the smooth brain hypothesis here.

I don’t know how or why you’re getting lambasted. You make excellent points and never make outlandish claims, just a common sense approach.

I’m a senior software engineer

Good. Thanks for telling us your opinion’s worthless.

Another professional here. Lemmy really isn’t a place where you’re going to find people listening to what you have to say and critically examining their existing positions. You’re right, and you’re going to get downvoted for it.

In this and other use cases I call it a pretty effective search engine, instead of scrolling through stackexchange after clicking between google ads, you get the cleaned up example code you needed. Not a Chat with any intelligence though.

That ChatGPT can be more useful than a web search is really more indicative of how bad the web has got, and can only get worse as fake text invades it. It’s not actually better than a functional search engine and a functional web, but the companies making these things have no interest in the web being usable. Pretty depressing.

Remember when you could read through all the search results on Google rather than being limited to the first hundred or so results like today? And boolean search operators actually worked and weren’t hidden away behind a “beware of leopard” sign? Pepperidge Farm remembers.

“despite the many people who have shown time and time and time again that it definitely does not do fine detail well and will often present shit that just 10000% was not in the source material, I still believe that it is right all the time and gives me perfectly clean code. it is them, not I, that are the rubes”

The problem with stuff like this is not knowing when you don’t know. People who had not read the books SSC Scott was reviewing didn’t know he had missed the points (or not read the book at all) till people pointed it out in the comments. But the reviews stay up.

Anyway this stuff always feels like a huge motte and bailey, where we go from ‘it has some uses’ to ‘it has some uses if you are a domain expert who checks the output diligently’ back to ‘some general use’.

A lot of the “I’m a senior engineer and it’s useful” people seem to just assume that they’re just so fucking good that they’ll obviously know when the machine lies to them so it’s fine. Which is one, hubris, two, why the fuck are you even using it then if you already have to be omniscient to verify the output??

“If you don’t know the subject, you can’t tell if the summary is good” is a basic lesson that so many people refuse to learn.

Ahah I’m totally with you, I just personally know people that love it because they have never learned how to use a search engine. And these generalist generative AIs are trained on gobbled up internet basically, while also generating so many dangerous mistakes, I’ve read enough horror stories.

I’m in science and I’m not interested in ChatGPT, wouldn’t trust it with a pancake recipe. Even if it was useful to me I wouldn’t trust the vendor lock-in or enshittification that’s gonna come after I get dependent on a tool in the cloud.

A local LLM on cheap or widely available hardware with reproducible input / output? Then I’m interested.

actually you know what? with all the motte and baileying, you can take a month off. bye!

Petition to replace “motte and bailey” per the Batman clause with “lying like a dipshit”.

Isn’t this a case of ‘the good bits are not original and the original bits are not good’. According to wikipedia it is from 2005.

various forms of equivocation

I don’t see what useful information the motte and bailey lingo actually conveys that equivocation and deception and bait-and-switch didn’t. And I distrust any turn of phrase popularized in the LessWrong-o-sphere. If they like it, what bad mental habits does it appeal to?

The original coiner appears to be in with the brain-freezing crowd. He’s written about the game theory of “braving the woke mob” for a Tory rag.

I fucking love how goddamn structured kym is ito semiotics and symbolism

it’s an amazing confluence that might not have happened if persons unknown didn’t care, but they did. and thus we have it! phenomenal

Does a sealion have bootlicker nature? Ugh.

What is up with the design of this website. There’s huge amounts of dead space.

The content though… it just reads like somebody who’s pretty angry. I do like the odd rant here and there, but this one misses the mark. “I Will Fucking Piledrive You If You Mention AI Again” was much more to my liking (despite the damn 3 column design).

sorry you don’t like anger! we’ll improve your lemmyverse experience

been a little while since we had A Programming Dot Dev here, I think. guess it was time

Protip: If you give a hackernews 25 candies, it evolves into a programmingdotdev.

@o7___o7 @froztbyte never feed them after midnight

are they allergic to water? I may have a solution if they’re allergic to water

sandworms on lemmy??

What is up with the design of this website. There’s huge amounts of dead space.

The content though… it just reads like somebody who’s pretty angry. I do like the odd rant here and there, but this one misses the mark. “I Will Fucking Piledrive You If You Mention AI Again” was much more to my liking (despite the damn 3 column design).

Perhaps because I paused to read a little of the Dunciad before continuing with this essay, I think many of its attacks lack the precision and wit to justify their viciousness. I’m generally sympathetic to the premise, but whatever its merits it does not compare all that well with Alexander Pope. Maybe there is more insight and entertainment yet to be derived from comparing ChatGPT itself to Dulness.

profound reasoning indeed, this way to the egress so you can contemplate further